Most of the explicit commands given throughout this tutorial assume a sh-like shell (bash, zsh, ksh etc.). They are given in the form

% ls -l

If you are using tcsh (or csh), please adapt the examples accordingly wherever there is a syntax difference.

~/GlobalPhasing/data/autoPROC/1o22_peak_images.tar.bz2

Distance: 200.0 BeamCenter X: 105.01 BeamCenter Y: 105.00 PhiDelta: 1.00 PhiStart: 90.00 FrameNumber start: 1 FrameNumber end: 90

Ideally one should run this tutorial in an empty directory, e.g. with

% mkdir -p ~/1o22
% cd ~/1o22

Then we want to copy the images over, which can be done with

% tar -xjvf /where/ever/1o22_peak_images.tar.bz2

Note: jobs will run faster if the images are located on a local disk. Often, a user's HOME directory is on a network filesystem, so this might not be a good location (it slows down not only this autoPROC job, but also everyone else who is using that same disk). If there is a large local disk available (e.g. /scratch or /tmp), we would recommend unpacking the files into a directory on that local filesystem. One can check the available space e.g. with

% df -h /tmp
% df -h /scratch
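After unpacking, it can be worth confirming that all 90 frames of the scan (frame numbers 1 to 90) have arrived. The following is only a minimal sketch in plain sh: the helper name `count_frames` is made up for this tutorial and is not part of autoPROC, and it simply assumes that every image file ends in `.img`.

```sh
# Hypothetical helper (not part of autoPROC): count the *.img files in a directory.
count_frames() {
    n=0
    for f in "${1:-.}"/*.img; do
        # if the pattern matches nothing, $f is the literal pattern and -e fails
        [ -e "$f" ] && n=$((n + 1))
    done
    echo "$n"
}

# Compare with the 90 frames expected for this data set
found=$(count_frames .)
if [ "$found" -eq 90 ]; then
    echo "all 90 frames present"
else
    echo "expected 90 frames, found $found"
fi
```

A different count would point to an incomplete download or a failed extraction.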

Once we have the images, we can have a closer look at some basic information about them. For that we have the tool imginfo that reads the header information and produces a consistent output format for those items:

% imginfo *001.img

which returns

    ################# File = tm0875_8p44_1_E1_001.img
    >>> Image format detected as ADSC
    ===== Header information:
    date = 13 Oct 2002 18:47:43
    exposure time [seconds] = 45.000
    distance [mm] = 200.000
    wavelength [A] = 0.977800
    Phi-angle (start, end) [degree] = 90.000 91.000
    Oscillation-angle in Phi [degree] = 1.000
    Omega-angle [degree] = 0.000
    2-Theta angle [degree] = 0.000
    Pixel size in X [mm] = 0.102400
    Pixel size in Y [mm] = 0.102400
    Number of pixels in X = 2048
    Number of pixels in Y = 2048
    Beam centre in X [mm] = 104.900
    Beam centre in X [pixel] = 1024.414
    Beam centre in Y [mm] = 104.800
    Beam centre in Y [pixel] = 1023.438
    Overload value = 65535

We can see that the beam centre is recorded as almost exactly the mid-point of the image (pixel 1024.4, 1023.4 on a 2048 x 2048 detector): this is slightly unusual, unless the detector is extremely well aligned on the beamline.
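How close the recorded beam centre is to the mid-point can be checked by hand: dividing the beam centre in mm by the pixel size gives the position in pixels, which is then compared with half the number of pixels along that axis. A small sketch using awk follows; the helper name `beam_offset` is hypothetical, and taking "number of pixels / 2" as the mid-point is an assumption of this example.

```sh
# Hypothetical helper: offset (in pixels) of the beam centre from the image mid-point.
# Arguments: beam centre [mm], pixel size [mm], number of pixels along that axis.
beam_offset() {
    awk -v mm="$1" -v px="$2" -v n="$3" 'BEGIN { printf "%.2f\n", mm / px - n / 2 }'
}

beam_offset 104.900 0.102400 2048   # X: prints 0.41
beam_offset 104.800 0.102400 2048   # Y: prints -0.56
```

Both header values are therefore within about half a pixel of the geometric centre of the detector.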

To get a detailed record of the sequence of data-collection we need to use the timestamp in the image header (the timestamp on the file could easily be messed up through copying):

% imginfo -v *.img | awk '/File/{f=$NF}/date/{print f,$0}'

which returns the list of images with their creation time:

    tm0875_8p44_1_E1_001.img date = 13 Oct 2002 18:47:43
    tm0875_8p44_1_E1_002.img date = 13 Oct 2002 18:48:36
    ...
    tm0875_8p44_1_E1_015.img date = 13 Oct 2002 19:00:08
    tm0875_8p44_1_E1_016.img date = 13 Oct 2002 19:27:40
    ...
    tm0875_8p44_1_E1_030.img date = 13 Oct 2002 19:40:05
    tm0875_8p44_1_E1_031.img date = 13 Oct 2002 20:07:33

Each image took about 50 seconds to collect, and after each block of 15 images there is a larger time gap: this is because of the interleaved wavelength collection protocol.

Running

% imginfo -v *.img | awk '/File/{f=$NF}/Epoch/{print f,$NF}' | sort -n -k 2

will return the list of images sorted by time (epoch, i.e. seconds since 01.01.1970). A slightly shortened output looks like this:

    tm0875_8p44_1_E1_001.img 1034534863
    tm0875_8p44_1_E1_002.img 1034534916
    ...
    tm0875_8p44_1_E1_015.img 1034535608
    tm0875_8p44_1_E1_016.img 1034537260
    ...
    tm0875_8p44_1_E1_030.img 1034538005
    tm0875_8p44_1_E1_031.img 1034539653

If all images (for the three scans) were present in the current directory, this would show nicely the collection pattern for an interleaved wavelength scan.
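The gaps between blocks can also be picked out automatically from such an epoch listing. The following sketch assumes input lines of the form "filename epoch", already sorted by epoch; the helper name `find_gaps` and the threshold are choices made for this example only.

```sh
# Hypothetical helper: read "filename epoch" lines (sorted by epoch) on stdin and
# report every image that started more than $1 seconds after the previous one,
# together with the size of that gap in seconds.
find_gaps() {
    awk -v limit="$1" '
        NR > 1 && $2 - prev > limit { print $1, $2 - prev }
        { prev = $2 }
    '
}

# The shortened listing from this tutorial, without the elided lines.
# A limit of 1000 s is used here because consecutive lines in this shortened
# list can be several images apart.
find_gaps 1000 <<'EOF'
tm0875_8p44_1_E1_001.img 1034534863
tm0875_8p44_1_E1_002.img 1034534916
tm0875_8p44_1_E1_015.img 1034535608
tm0875_8p44_1_E1_016.img 1034537260
tm0875_8p44_1_E1_030.img 1034538005
tm0875_8p44_1_E1_031.img 1034539653
EOF
```

This reports images 016 and 031, with gaps of 1652 and 1648 seconds respectively. With the helper defined in the current shell and the complete list of images, a smaller limit is more appropriate, e.g.

% imginfo -v *.img | awk '/File/{f=$NF}/Epoch/{print f,$NF}' | sort -n -k 2 | find_gaps 300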

The easiest way is to run a command like

% process -d 01 >01.lis 2>&1 # sh/ksh/bash/zsh

or (if one uses csh/tcsh as shell)

% process -d 01 >& 01.lis # csh/tcsh

The above example (with all default settings) assumes that the images are in the same directory the 'process' command is started in. If this is not the case, two mechanisms are provided:

% process -I /dir/where/images/are -d 01 > 01.lis 2>&1

% process -Id lowRes,/data/images1,lyso_###.img,1,90 -Id highRes,/data/images2,lyso_high_###.img,1,180 -d 01 > 01.lis 2>&1
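Judging from the example above, each -Id value is a comma-separated list of scan identifier, image directory, file name template (with ### standing for the frame number), first frame and last frame. That reading of the fields is an interpretation of the example, and the helpers below are hypothetical: they only assemble such a string, assuming three-digit frame numbers and an .img extension, and /data/1o22 is a placeholder directory.

```sh
# Hypothetical helper: turn an image file name into a template by replacing
# the three-digit frame number in front of ".img" with "###".
image_template() {
    printf '%s\n' "$1" | sed 's/[0-9][0-9][0-9]\.img$/###.img/'
}

# Hypothetical helper: assemble one -Id value from
# identifier, directory, name of any image in the scan, first frame, last frame.
scan_def() {
    printf '%s,%s,%s,%s,%s\n' "$1" "$2" "$(image_template "$3")" "$4" "$5"
}

scan_def peak /data/1o22 tm0875_8p44_1_E1_001.img 1 90
# prints: peak,/data/1o22,tm0875_8p44_1_E1_###.img,1,90
```

The resulting string could then be passed to process after an -Id flag, in the same way as in the command above.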

The basic assumption for a single autoPROC run (using the process command) is that all images used in that run have a clearly defined relation in terms of orientation. That means that those cases work:

whereas those won't:

Furthermore, the relation between different scans needs to be clearly defined through

Running

% process -d 01 > 01.lis 2>&1

will process all found images (in the current directory) with a reasonable set of defaults (we hope). There are two modifications that might be of interest:

% process -M fast -d 02 > 02.lis 2>&1

% process -M automatic -d 03 > 03.lis 2>&1
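When trying several variants like these, it helps to keep each run in its own numbered output directory, as done above with 01, 02 and 03. The sketch below picks the next unused two-digit name in the current directory; the helper name `next_run_dir` is hypothetical and not part of autoPROC.

```sh
# Hypothetical helper: print the first two-digit name (01, 02, ...) that does
# not yet exist in the current directory.
next_run_dir() {
    i=1
    while [ -e "$(printf '%02d' "$i")" ]; do
        i=$((i + 1))
    done
    printf '%02d\n' "$i"
}

next_run_dir
```

With the helper defined in a sh-like shell, a run could then be started with

% d=$(next_run_dir); process -d $d > $d.lis 2>&1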

The first thing you'll see is some information about the way autoPROC was run (list of command-line arguments). There is a fairly lengthy paragraph about the beam centre: since this is very often the main reason for a failing processing run, it is explicitly mentioned here again.

A list of found scans is presented (image identifiers and range of images that make up each scan). In this example here, only one scan is present (peak wavelength of a Se-MET MAD experiment).

The first step consists of finding spots on a set of images. The default is to search for spots on all available images. Although this might seem excessive (and often is not required for getting a successful indexing), there are various analysis steps that work much more reliably if a larger number of images is used for the spot search. These include:

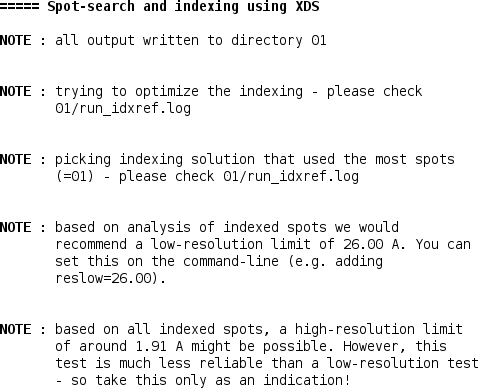

In this case, autoPROC decided that the initial indexing solution was not good enough to be used directly - mainly because the solution didn't use the majority of found spots. A procedure for improving that initial solution will be used (run_idxref tool), which can e.g. detect multiple lattices.

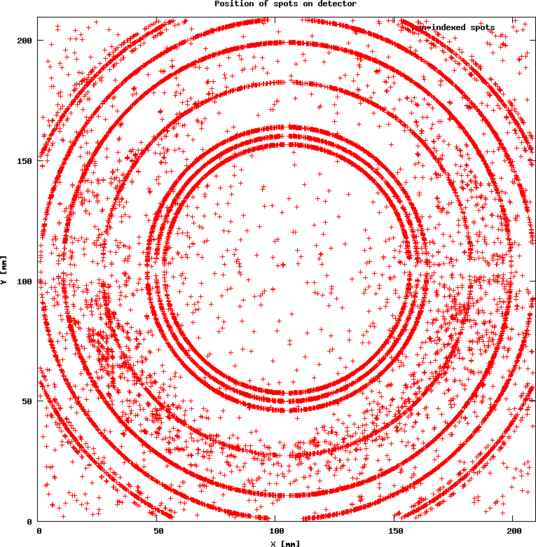

Here only 60% of all spots can be used for a successful indexing solution. What happened to the remaining 40%? After all, those nearly 16000 spots can't be used in a second round of indexing (which would be possible if there were multiple lattices due to non-merohedral twinning, split crystals etc.). autoPROC plots the unused spots as a function of detector position (here: 01/02_SPOTS.noHKL.png):

This shows clearly a large number of very strong ice-rings. Any *.png file can be visualised using the 'display' program:

% display 01/02_SPOTS.noHKL.png

Using the list of indexed spots, some predictions of likely low- and high-resolution limits can be made as well.

The default in autoPROC is to index in P1. The detailed analysis of the indexing results is given:

One can already see that P1 might not be the correct final space group (two cell axes are nearly identical and the angles are very close to 90 degrees). However, to make a final assignment of the most likely space group it is better to have a full set of integrated intensities available, so the following integration is still run in space group P1.
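The kind of check described here (two axes nearly equal, all angles near 90 degrees) can be written down as a small test on the six cell parameters. This is only an illustrative sketch: the helper name `looks_tetragonal` and the tolerances (0.5% on the a/b axis lengths, 0.5 degrees on the angles) are assumptions of this example and not what autoPROC uses internally. A matching cell metric only suggests a higher lattice symmetry; it does not prove the space group.

```sh
# Hypothetical helper: does a cell (a b c alpha beta gamma) have a metric that
# is compatible with a tetragonal lattice? Tolerances are arbitrary choices.
# The c axis ($3) is not constrained and therefore not used.
looks_tetragonal() {
    awk -v a="$1" -v b="$2" -v al="$4" -v be="$5" -v ga="$6" \
        -v tol_len=0.005 -v tol_ang=0.5 '
        function abs(x) { return x < 0 ? -x : x }
        BEGIN {
            ok = abs(a - b) / a <= tol_len &&
                 abs(al - 90) <= tol_ang &&
                 abs(be - 90) <= tol_ang &&
                 abs(ga - 90) <= tol_ang
            print (ok ? "yes" : "no")
        }'
}

# The cell used as an example in this tutorial
looks_tetragonal 58 58 102 90 90 90   # prints yes
```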

If the user is very confident about the space group and cell, autoPROC can be run with

% process cell="58 58 102 90 90 90" symm="P43212" -d 02 > 02.lis 2>&1

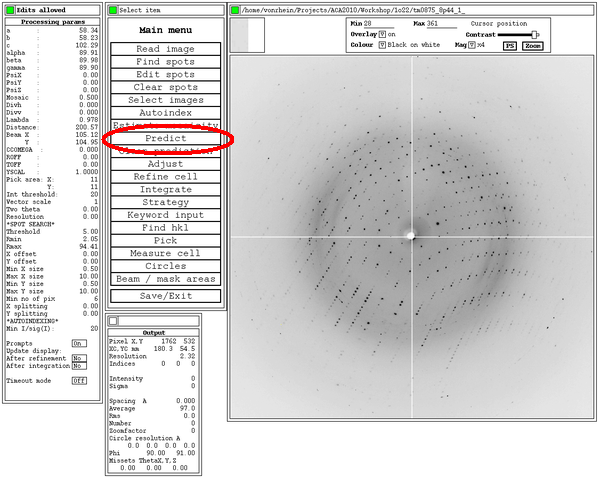

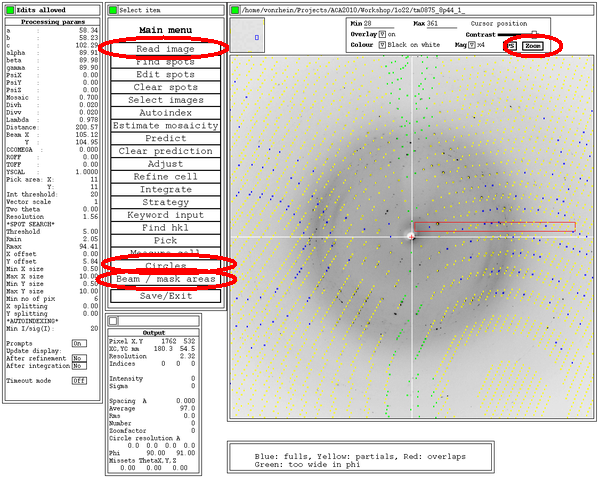

At this point, a little text file is prepared to allow visualisation of the current orientation matrix (and therefore predictions) using MOSFLM. This can be very useful to check if everything is working correctly - especially since XDS doesn't have a viewer to show (and modify) predictions:

% ipmosflm < 01/index_view.dat

This will start the (old) MOSFLM interface - so make sure to have a binary that still supports this interface.

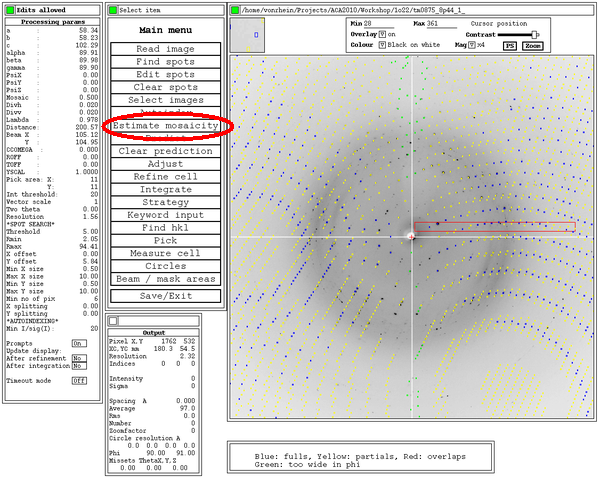

After hitting the 'Predict' button, the predictions given the current orientation matrix are shown: these should superimpose on actual spots. Ideally, all spots should have a prediction box around them: this depends on an accurate estimate of mosaicity though. After the initial indexing this value isn't yet available, but can be estimated from within MOSFLM:

Some additional tools within this MOSFLM interface could be useful, e.g.

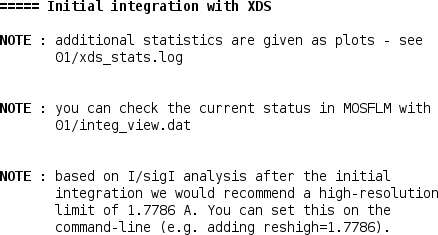

After the first integration (by default in P1), some additional plots are available for inspection:

% display 01/*.png

An updated file for visualisation using MOSFLM is also written.

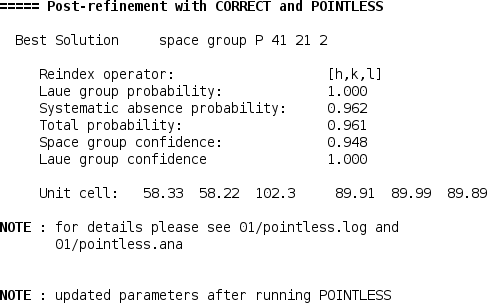

Since by default the initial integration was done in P1, POINTLESS is now used to determine the most likely space group:

This step obviously cannot analyse screw axes if no (or insufficient) reflections along that axis were collected. Also, a distinction between enantiomorphic space groups (P41212 versus P43212) is not possible at this stage: for that the structure solution step is usually required (density modification in case of experimental phasing, or molecular replacement with both possibilities).

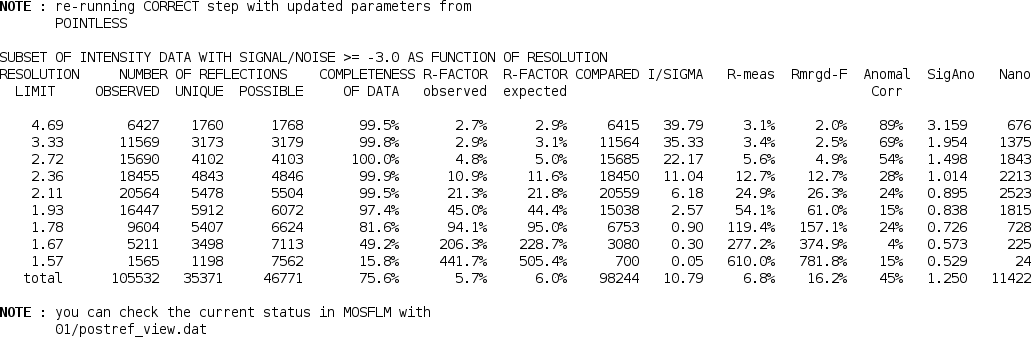

Using the determined space group, the final post-refinement step is repeated:

Now that the (hopefully) correct space group is known, the integration is repeated with these settings. At the end an updated table of statistics, a summary and an updated visualisation file are produced:

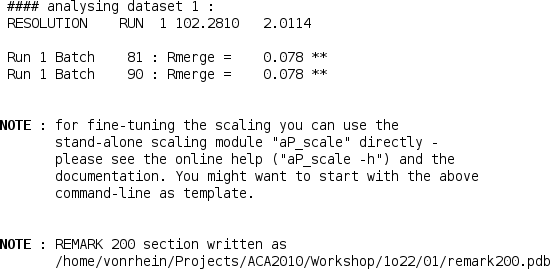

Now that a set of integrated intensities is available, they need to be scaled and merged with an appropriate high-resolution cut being applied. For that we use the SCALA program (through the "aP_scale" tool):

The scaling step can also be run by hand using the "aP_scale" tool - for more help just run

% aP_scale -h

To see some detailed information about the scaling step, the CCP4 "loggraph" utility can be used - e.g.

% loggraph 01/scala.log

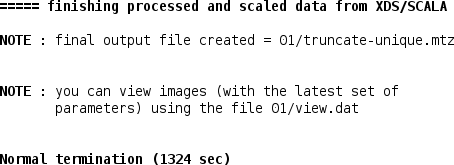

The merged intensities are then converted into amplitudes (and anomalous differences) with the CCP4 "truncate" program:

Details can be seen using

% loggraph 01/truncate.log

The final set of results will consist of